An insurance firm based in the US invests in property catastrophe reinsurance and the retrocession market. Its analytics team's current initiative involves scraping various weather websites to better understand how weather events affect the loss ratio, and to use this information for future loss reserving.

They engaged Digital Alpha to build a custom weather data product that automates data extraction at scale and ingests the data in a uniform way.

The Challenge

At present, the firm's analytics team uses Python to extract event data and export it to Excel spreadsheets. The critical challenges in this process are:

- Manual data extraction: Analysts use Python to extract information from approximately 1,300 Japanese weather data sites and export it to Excel or a database. Once the data is dumped into a worksheet, it is consolidated into a single table. The whole process is time-consuming and requires a lot of manual intervention.

- Lack of real-time insight: Because data extraction is manual, changes on a site are not reflected in the data in real time, which makes it an unreliable source for decision-making.
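The consolidation step described above (many site-specific layouts merged into one table) is what a uniform ingestion layer replaces. The following is a minimal Python sketch, assuming each scraped record arrives as a dict keyed by the site's own field labels. The site names, field labels, and the `FIELD_MAPS`, `normalize`, and `consolidate` names are hypothetical and are not taken from the actual product.

```python
import csv
import io

# Hypothetical per-site mappings: each site labels the same measurement
# differently, so every source maps its labels onto one shared schema.
FIELD_MAPS = {
    "site_a": {"date": "obs_date", "rain_mm": "precip", "wind_ms": "max_wind"},
    "site_b": {"date": "Date", "rain_mm": "Rainfall(mm)", "wind_ms": "Wind(m/s)"},
}

COLUMNS = ["site", "date", "rain_mm", "wind_ms"]


def normalize(site, raw):
    """Map one raw record from `site` onto the shared schema."""
    row = {"site": site}
    for target, source_field in FIELD_MAPS[site].items():
        value = raw.get(source_field)
        if value in (None, ""):
            value = None  # missing measurement
        elif target != "date":
            value = float(value)  # numeric measurements become floats
        row[target] = value
    return row


def consolidate(records):
    """Return every normalized (site, raw_record) pair as one CSV table."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    for site, raw in records:
        writer.writerow(normalize(site, raw))  # None is written as an empty cell
    return buffer.getvalue()
```

Adding a new site then means adding one entry to the mapping rather than hand-editing a worksheet.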

Solution

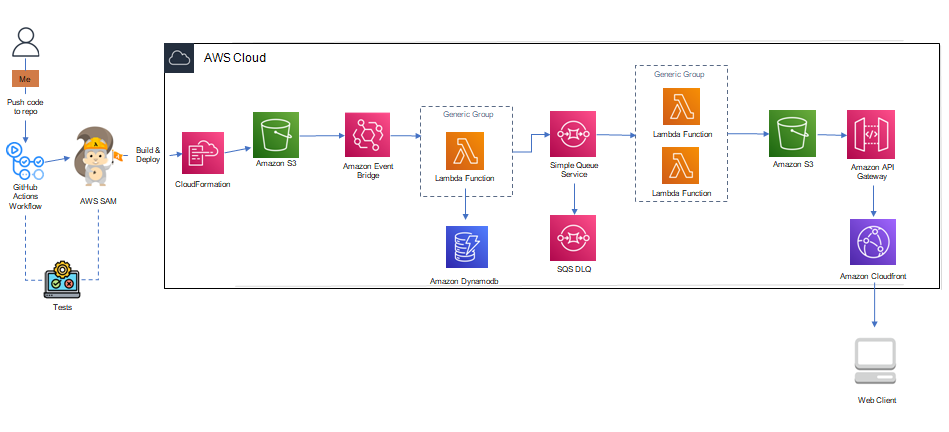

Digital Alpha implemented its serverless web scraping application to scrape weather data from websites for the specific ZIP codes the business requires.
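A minimal sketch of what one scraping function could look like, assuming a Lambda handler that receives a ZIP code in its event, fetches a page, and extracts tagged values using only the standard library. The URL pattern, the `data-field` markup, and the event shape are hypothetical; each real site would need its own parsing rules. The fetcher is injectable so the parsing can be tested without network access.

```python
import json
import urllib.request
from html.parser import HTMLParser

# Hypothetical endpoint: the case study does not describe the real URL patterns.
BASE_URL = "https://weather.example.com/forecast?zip={zip_code}"


class FieldParser(HTMLParser):
    """Collect text from <td data-field="..."> cells into a dict.

    Assumes (hypothetically) that the page tags each value with a
    data-field attribute.
    """

    def __init__(self):
        super().__init__()
        self.fields = {}
        self._current = None

    def handle_starttag(self, tag, attrs):
        if tag == "td":
            self._current = dict(attrs).get("data-field")

    def handle_endtag(self, tag):
        if tag == "td":
            self._current = None

    def handle_data(self, data):
        if self._current and data.strip():
            self.fields[self._current] = data.strip()


def parse_weather(html):
    parser = FieldParser()
    parser.feed(html)
    parser.close()
    return parser.fields


def fetch(url, timeout=10.0):
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def lambda_handler(event, context, fetcher=fetch):
    """Scrape one ZIP code; `event` is assumed to look like {"zip_code": "100-0001"}."""
    zip_code = event["zip_code"]
    html = fetcher(BASE_URL.format(zip_code=zip_code))
    record = {"zip_code": zip_code, **parse_weather(html)}
    return {"statusCode": 200, "body": json.dumps(record)}
```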

The AWS Serverless Application Model (SAM) streamlines building and deploying Lambda functions. With SAM, users can set up continuous integration and test and debug serverless code locally.

The architecture is flexible, cost-effective, and resilient, and it lets users build robust, decoupled services.
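One way the decoupling could work, sketched under assumptions: an EventBridge-scheduled dispatcher pushes one SQS message per ZIP code, and worker Lambdas consume the queue. `dispatch` takes a `send_batch` callable (in production, a wrapper around boto3's `send_message_batch`), and `handle_sqs_batch` returns the partial-batch-failure response that Lambda honors when `ReportBatchItemFailures` is enabled on the event source mapping. The function names and message body format are hypothetical.

```python
import json

# SQS SendMessageBatch accepts at most 10 messages per request.
MAX_BATCH = 10


def build_batches(zip_codes):
    """Split ZIP codes into lists of SendMessageBatch entries."""
    batches = []
    for start in range(0, len(zip_codes), MAX_BATCH):
        chunk = zip_codes[start:start + MAX_BATCH]
        # Entry Ids only need to be unique within a single batch request.
        batches.append([
            {"Id": str(i), "MessageBody": json.dumps({"zip_code": code})}
            for i, code in enumerate(chunk)
        ])
    return batches


def dispatch(send_batch, zip_codes):
    """Enqueue one message per ZIP code; returns the number of batches sent."""
    batches = build_batches(list(zip_codes))
    for entries in batches:
        send_batch(entries)
    return len(batches)


def handle_sqs_batch(event, scrape):
    """Process an SQS-triggered Lambda event, reporting only failed messages."""
    failures = []
    for record in event["Records"]:
        try:
            scrape(json.loads(record["body"])["zip_code"])
        except Exception:
            # Only these message IDs return to the queue for retry
            # (requires ReportBatchItemFailures on the event source mapping).
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

In a deployed dispatcher, `send_batch` might be `lambda entries: sqs.send_message_batch(QueueUrl=queue_url, Entries=entries)` with `sqs = boto3.client("sqs")`. Because the queue sits between the two functions, a slow or failing site delays only its own message rather than the whole run.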

Services/Components Used:

- Amazon S3 – Stores and protects any amount of data for use cases such as cloud-native and mobile applications.

- Amazon EventBridge – Schedules the Lambda function to run periodically.

- AWS Lambda – Enables users to run code without managing servers and scales automatically.

- Amazon SQS – Allows users to decouple and scale serverless applications.

- AWS SAM – Open-source framework for building serverless applications; it provides shorthand syntax to express functions, APIs, databases, and event source mappings.

- Amazon DynamoDB – Provides native, server-side support for transactions, which simplifies making coordinated, all-or-nothing changes to multiple items within and across tables. Transactions extend DynamoDB's scale and performance to a broader set of mission-critical workloads.
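To illustrate the all-or-nothing behavior, here is a minimal sketch that stores a scraped observation and updates a per-ZIP "latest" marker in a single `transact_write_items` call on boto3's low-level DynamoDB client. The table name, the `pk`/`sk` key design, and the attribute names are hypothetical; the case study does not describe the actual schema.

```python
def build_transaction(table, record):
    """Build TransactItems for boto3's low-level DynamoDB client.

    Hypothetical key design: pk = "ZIP#<zip>", sk = "OBS#<timestamp>" for each
    observation, plus a "LATEST" item tracking the most recently written one.
    """
    pk = {"S": f"ZIP#{record['zip_code']}"}
    return [
        {
            "Put": {
                "TableName": table,
                "Item": {
                    "pk": pk,
                    "sk": {"S": f"OBS#{record['observed_at']}"},
                    # The low-level API sends numbers as strings.
                    "rain_mm": {"N": str(record["rain_mm"])},
                },
                # Refuse to overwrite an observation that is already stored.
                "ConditionExpression": "attribute_not_exists(pk)",
            }
        },
        {
            "Update": {
                "TableName": table,
                "Key": {"pk": pk, "sk": {"S": "LATEST"}},
                "UpdateExpression": "SET observed_at = :t",
                "ExpressionAttributeValues": {":t": {"S": record["observed_at"]}},
            }
        },
    ]


def write_observation(table, record):
    """Write both items atomically: if the condition fails, neither is written."""
    import boto3  # imported here so build_transaction runs without boto3 installed

    client = boto3.client("dynamodb")
    client.transact_write_items(TransactItems=build_transaction(table, record))
```

If the same observation is scraped twice, the condition on the `Put` fails, DynamoDB cancels the whole transaction, and the "latest" marker is left untouched.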

Deployment:

AWS Lambda lets users build lean, on-demand infrastructure that scales automatically with demand. Functions have access to the runtime's standard libraries; users can also build their own deployment package or use Lambda layers to include external libraries or even external Linux-based programs.
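As a minimal sketch of the layer approach, the helper below zips a directory of installed dependencies (for example, the output of `pip install -t build/deps requests`) so that its contents sit under `python/`, the directory Lambda's Python runtimes look for inside a layer. Lambda extracts layers to `/opt` and adds `/opt/python` to the import path. The function name and paths are illustrative.

```python
import os
import zipfile


def build_layer_zip(source_dir, zip_path):
    """Zip `source_dir` so its contents sit under python/ inside the archive."""
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        for root, _dirs, files in os.walk(source_dir):
            for name in files:
                full_path = os.path.join(root, name)
                rel_path = os.path.relpath(full_path, source_dir)
                # Prefix every entry with python/ so Lambda can import it from /opt/python.
                zf.write(full_path, arcname=os.path.join("python", rel_path))
    return zip_path
```

The resulting archive can then be uploaded as a layer through the console or with `aws lambda publish-layer-version --layer-name weather-deps --zip-file fileb://layer.zip` (the layer name is hypothetical), and attached to the scraping function.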

Users can access AWS Lambda through the AWS Management Console to create a new function, update its code, or invoke it.

Results and Benefits

The reinsurance firm can now streamline and automate data extraction, and no manual intervention is needed to identify and fix data when a site changes.
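The case study does not say how site changes are detected. One common technique, sketched here as an assumption, is a layout fingerprint: hash the page's tag structure while ignoring text and attribute values, so daily weather updates leave the fingerprint unchanged but a redesign that would break the parser changes it. The function names are hypothetical.

```python
import hashlib
from html.parser import HTMLParser


class SkeletonParser(HTMLParser):
    """Record the tag/attribute-name skeleton of a page, ignoring text."""

    def __init__(self):
        super().__init__()
        self.parts = []

    def handle_starttag(self, tag, attrs):
        # Keep the tag and its sorted attribute names; drop attribute values
        # and text, which change with every weather update.
        names = ",".join(sorted(name for name, _ in attrs))
        self.parts.append(f"<{tag} {names}>")

    def handle_endtag(self, tag):
        self.parts.append(f"</{tag}>")


def layout_fingerprint(html):
    parser = SkeletonParser()
    parser.feed(html)
    parser.close()
    return hashlib.sha256("".join(parser.parts).encode("utf-8")).hexdigest()


def layout_changed(html, known_fingerprint):
    """True when the page structure differs from the stored fingerprint."""
    return layout_fingerprint(html) != known_fingerprint
```

A scheduled run could compare each page against its stored fingerprint and flag only the sites whose layout actually changed. The trade-off is that ignoring attribute values also hides a renamed class or data attribute, so a check that the expected fields were parsed is a useful complement.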

Analysts can run the scraper across multiple sites and collect and analyze all the damage data points, which helps them advise decision-makers on the likelihood that an event will be catastrophic. A few key advantages for the business:

- Automating manual processes delivers efficiencies that lower operating costs.

- Speed and accuracy deliver a competitive advantage that increases customer retention.

- Digitizing incoming information enables real-time triage. Automated data extraction feeds triage and decision tools that evaluate quotes, helping underwriters prioritize and optimize opportunities.